The Rise of Multi-Agent Enterprise Architecture

Protocols, revenue workflows, and governance realities behind the multi-agent enterprise shift.

The Conversation That Keeps Going

Executives hear “Agentforce” or “multi-agent architecture” and imagine autonomous digital workers closing deals. Engineers hear the same terms and start debating protocol specifications, tool invocation, and orchestration runtimes. Both groups often leave the conversation assuming they are aligned. In many cases, they are not.

From my perspective, the more interesting shift is not the hype around digital coworkers. It is the possibility that enterprise software may gradually move from systems that primarily store and route data to systems that can help coordinate decisions and actions through specialized AI agents. A lead qualification agent. A forecasting agent. A pricing agent. A renewal agent. Each with a bounded role, its own reasoning loop, and the ability to interact with tools, systems, and potentially other agents.

I do not see this as a simple feature release. I see it as a possible architectural direction that deserves more careful evaluation. If organizations approach it as just another AI rollout, they may underestimate the importance of governance, data quality, and trust boundaries.

What follows is my attempt to make sense of this shift through the lenses of protocols, workflows, governance, and implementation. I am writing this less as a definitive industry verdict and more as a practical perspective on what this evolution could look like inside revenue organizations.

Why Now, and Why It Feels More Practical Than Before

Multi-agent systems as a concept are not new. Researchers were studying autonomous agents that coordinate and compete long before modern LLMs entered the enterprise conversation. What seems to have changed between 2022 and 2026 is not the idea itself, but the practicality of implementing it.

Large language models have become a usable reasoning and language layer. That makes it more feasible to build systems that can interpret messy enterprise context, plan sequences of actions, and interact with both humans and software systems in more flexible ways than earlier automation tools allowed.

Enterprise platforms have responded quickly. Salesforce’s Agentforce, for example, frames agents as requiring three ingredients: data, reasoning, and actions. I think that framing is useful because it captures why the current moment feels different. Models are improving, enterprise platforms are exposing actions through more standardized interfaces, and governance conversations around AI are becoming more concrete.

To me, the bigger catalyst is not any single vendor product. It is the growing interest in standardization. If enterprises are going to work with multiple agents across multiple systems, the real challenge becomes how to reduce the integration burden. That is where the protocol conversation starts to matter.

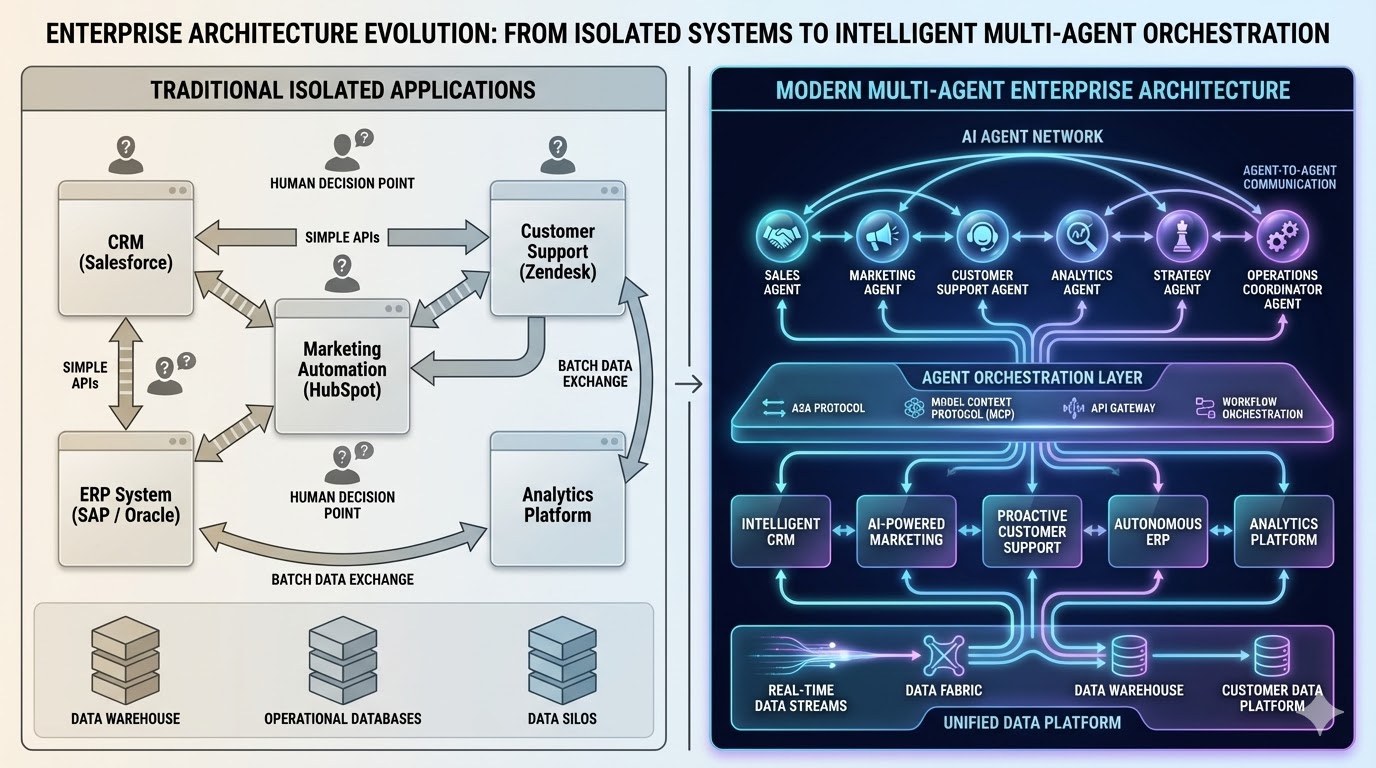

Siloed systems connected by batch APIs and human routing on the left. Coordinated agent networks with standardized protocols and unified data on the right. The transition between the two may be where many organizations are still experimenting.

Siloed systems connected by batch APIs and human routing on the left. Coordinated agent networks with standardized protocols and unified data on the right. The transition between the two may be where many organizations are still experimenting.

The Protocol Landscape: A2A, MCP, and What Actually Matters

In this context, a protocol is not a product. It is a shared contract for how systems exchange messages, manage identity, expose capabilities, and handle failures. That may sound technical, but it becomes highly relevant once multiple agents and tools are involved. Without those shared contracts, every integration becomes custom, brittle, and difficult to govern.

Two protocol families stand out in current discussions.

Agent2Agent (A2A): Cross-Agent Interoperability

A2A is an open protocol, initially championed by Google Cloud with an expanding ecosystem around it, that aims to support how agents discover each other, negotiate interactions, and collaborate on tasks while keeping their internal implementations opaque.

A practical example might be a revenue-side agent that needs to interact with a finance-side agent for pricing approval. Rather than hardcoding a bespoke interaction, A2A proposes a shared pattern for that handoff.

Three ideas are especially useful here.

Capability discovery happens through an Agent Card, which is essentially a machine-readable description of what an agent can do, what interfaces it supports, and what security it requires.

Task management gives the interaction lifecycle more structure, especially for work that may take time or involve multiple states.

Collaboration messages define how content such as text, structured payloads, or files moves between agents, including patterns for streaming and asynchronous completion.

In my view, A2A becomes interesting when organizations need interoperability across teams, platforms, or vendors. That said, it also introduces meaningful risks. If external agents are treated too casually as trusted collaborators, the architecture can create opportunities for privilege escalation or confused-deputy patterns. Version drift is another concern. An advertised capability may no longer align with actual runtime behavior after model or configuration changes.

So while A2A is promising, I would treat it less as a plug-and-play answer and more as an interoperability layer that still requires strong governance.

Model Context Protocol (MCP): Tool and Data Access

If A2A is about agent-to-agent interaction, MCP is easier to think of as agent-to-tools and agent-to-context access.

MCP, which originated with Anthropic and has since become more broadly discussed, aims to standardize how AI applications connect to external tools and data sources. In practical terms, that could mean giving an agent structured access to a CRM query, a data warehouse lookup, a workflow trigger, or a messaging action.

MCP servers expose three broad types of capabilities:

Resources, which are readable data items or records

Tools, which are callable operations with structured input and output definitions

Prompts, which are reusable interaction templates or patterns

I find MCP useful as a concept because it reduces the need to treat every tool integration as a one-off design problem. It introduces a more consistent way to think about what an agent can access and how that access is described.

But the governance concerns are equally important. If agents can access powerful tools, then poor access design or prompt injection risks become much more serious. A model that reasons incorrectly is one problem. A model that reasons incorrectly and then takes action through a tool is a very different one.

Putting the Layers Together

One pattern that seems useful to me is to separate these layers conceptually.

- MCP for exposing tools and context

- A2A for delegating work to external agents that own their own reasoning loops

- Tool calling for the model-level interface that turns model intent into structured actions

- Agent runtimes for coordinating workflow logic, retries, approvals, and verification

I do not see these as competing patterns. I see them as complementary layers that may coexist in a more mature enterprise architecture.

Revenue Ops: Where This Gets Concrete

The area where this architecture becomes most interesting to me is Revenue Operations.

RevOps already sits at the intersection of CRM data, marketing systems, analytics, forecasting, process design, and cross-functional execution. Because of that, it seems like one of the more natural places to explore agent-based coordination.

I think the important distinction here is that multi-agent architecture is not just about generating more insights. It is about connecting signals to governed action.

Lead-to-Meeting

One possible workflow is a lead-to-meeting motion where a marketing agent classifies inbound leads using campaign data, web behavior, and enrichment tools. A routing agent evaluates ownership rules and SLA logic. An outreach agent drafts messaging. A scheduling agent handles booking. A RevOps audit agent validates that required fields and attribution logic are intact before pipeline progression happens.

I am not suggesting every organization should implement this exact flow. But as an architecture thought experiment, it illustrates where bounded agents could add value. The emphasis is on coordination, not replacing every human touchpoint.

Pipeline Risk Triage

This is another area where the architecture starts to feel practical.

A conversation intelligence agent could extract objections, timeline slips, or budget concerns from calls and emails. A forecast-oriented agent could interpret those signals alongside opportunity history. A CRM hygiene agent could standardize notes and enforce stage-entry requirements. A manager-facing agent could prepare inspection summaries or review prompts.

None of this requires assuming full autonomy. Even a partially agent-assisted workflow could improve consistency and reduce the operational lag between signal detection and action planning.

Renewal and Expansion

Renewals and expansions are interesting because the relevant context often lives outside the CRM. Product usage, support history, invoices, customer health indicators, and account context all matter.

A churn-risk agent might detect usage decline or unresolved support issues. A success-play agent could prepare recommended next steps. A growth-oriented agent might surface expansion signals. A governance-oriented layer could ensure the recommended actions are consistent with privacy, consent, and account policy boundaries.

To me, this is where the conversation becomes less about flashy AI and more about architecture. The question is not whether an agent can draft a message. The question is whether the surrounding system is mature enough to support coordinated, reliable, and auditable action.

Walking Through a Deal-Risk Rescue Play

If I were to map a deal-risk rescue flow conceptually, it might look like this:

A sales call ends. A conversation intelligence agent extracts risks and commitments. A forecast agent recalculates risk and updates scenario projections. If the risk threshold is crossed, a coordinator agent creates a structured rescue plan. That coordinator may call an external pricing or deal desk agent through A2A, while also invoking MCP-exposed tools to update CRM fields and schedule follow-up actions. An audit agent logs the sequence of decisions and evidence.

That kind of flow is not important because it proves agents can do everything. It is important because it shows how multiple layers, protocols, and approval gates could work together in a real business context.

Governance: The Part That Likely Determines Whether This Works

This is the section I find most important, because governance seems to be the difference between a compelling demo and a sustainable operating model.

Data Quality Is Now Agent Safety

One conclusion I keep coming back to is this: if agents are allowed to act on enterprise data, then data quality stops being just a reporting problem. It becomes a safety problem.

In a traditional workflow, an incorrect close date might distort a report until someone notices. In an agent-assisted workflow, that same close date might trigger a review, reprioritize work, or influence a pricing or forecasting decision automatically.

That changes the stakes.

So if organizations want to explore agent-based workflows seriously, I think they need stronger data contracts around key objects such as Lead, Contact, Account, Opportunity, and Renewal. Stage definitions, close-date semantics, and next-step conventions need to become more explicit than many teams are used to.

Prompt Injection and Privilege Escalation

The second governance concern is control over action.

Prompt injection and excessive agency are already well-documented risks in LLM systems. In a multi-agent environment, those risks can become more subtle because the system boundary is wider. A lower-privileged agent interacting with untrusted content could influence a higher-privileged pathway if role separation is weak.

That is why I think the separation between “reader” agents and “writer” agents is an important architectural idea. Agents that ingest transcripts, emails, or web content should not necessarily be the same agents that can send messages, approve discounts, or modify records.

What Good Governance Might Look Like

From my perspective, good governance in an agentic revenue architecture would include:

- scoped identities and least-privilege access

- traceability for every tool call and delegation event

- approval gates for high-impact actions

- constrained defaults rather than permissive defaults

- continuous monitoring of behavior, not just one-time setup

In other words, the governance model should grow with the autonomy model.

A Realistic Implementation Roadmap

If I were thinking about this as a phased adoption path for a RevOps team, I would not start with full autonomy. I would start with visibility, bounded experimentation, and measured expansion.

Phase 1: Readiness and Scope

Start with one or two workflows such as pipeline inspection or lead triage. Define semantic expectations for the objects involved. Identify where human approvals are mandatory. Capture baseline metrics and risks.

The purpose of this phase is not to launch agents quickly. It is to understand whether the underlying process and data are stable enough to support them.

Phase 2: Tool and Context Foundation

Set up tool and context access for read-heavy operations first. CRM reads, enrichment lookups, reporting access, and forecast retrieval are safer starting points than immediate write actions.

This is where MCP-style thinking becomes useful. The focus is on controlled access, logging, and evaluation.

Phase 3: Controlled Action Pilot

Only after the read path is reliable would I consider bounded write actions such as note updates, task creation, or structured field updates. Even then, approval gates and rollback mechanisms should remain central.

This phase is where organizations are likely to discover whether their assumptions about data quality and process consistency were realistic.

Phase 4: Cross-Agent Orchestration

Once the internal foundation is stable, then external delegation patterns become more plausible. This is where A2A-type orchestration could start to matter, especially for workflows that cross into finance, service management, or procurement.

But I would treat this as earned complexity, not a starting point.

Phase 5: Production Hardening

Only after repeated validation would it make sense to widen scope, formalize monitoring, and align governance with broader frameworks such as NIST AI RMF or ISO/IEC 42001.

The long-term question is not simply whether agents can be deployed. It is whether they can be trusted operationally.

Where This Lands for Me

I do not see multi-agent enterprise architecture as a switch that organizations turn on. I see it more as an architectural direction that may reshape how revenue systems are designed and operated over time.

The enabling pieces are becoming clearer. A2A offers a path for agent-to-agent interoperability. MCP offers a more standardized way to expose tools and context. Platform-native runtimes make orchestration more feasible than it was a few years ago.

But the harder work still seems organizational. Clean data contracts. Clear semantics. Scoped access. Approval models. Trust boundaries. Those are the foundations that will likely determine whether this becomes a durable operating model or just another overextended AI experiment.

My view is simple: start with visibility, move toward bounded autonomy, and treat orchestration as something that must be earned through discipline.

If this space matures the way many expect, the biggest advantage may not come from having more AI features. It may come from designing a better architecture for action.